Artificial intelligence is no longer just sci-fi

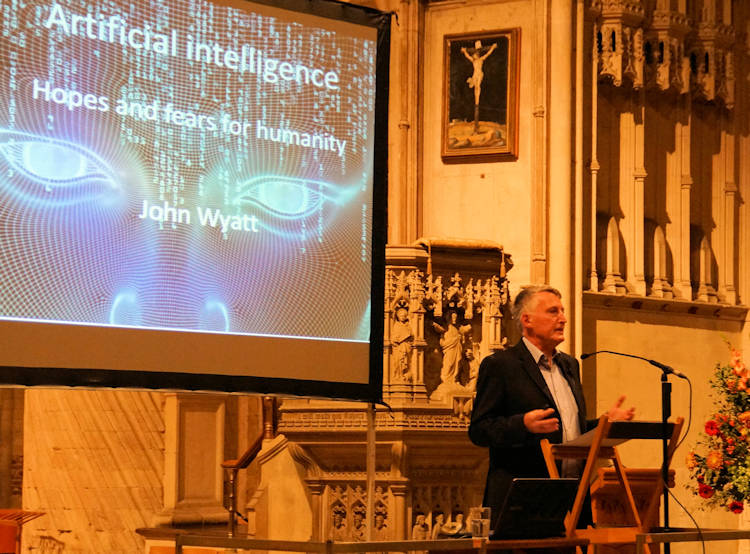

At last week's Science and Faith Lecture at Norwich Anglican Cathedral, Professor John Wyatt discussed artificial intelligence (AI) and its effects on humanity. Mark Sims reflects on what he said.

Near the start of his presentation, Prof Wyatt cited Philip K Dick's novel 'Do Androids Dream of Electric Sheep?' and its film adaptation, 'Blade Runner', directed by Ridley Scott, as examples of stories involving AI in the form of robots, or 'Replicants' in the film's parlance.

In Scott's film, Replicants are androids that are virtually identical to humans, yet superior physically and intellectually, though not emotionally. 'More human than human' is the slogan of the Tyrell Corporation, which produces the Replicants. They possess an in-built four-year lifespan that prevents them from developing too much emotional intelligence and thereby becoming able to supersede their programming. Dr Eldon Tyrell (Joe Turkel) is sought out by Replicant and 'prodigal son' Roy Batty (Rutger Hauer), who requests more life from his 'father'.

(WARNING: This clip contains violence)

The film is set in 2019 and, as far as public knowledge goes, humanity hasn't come quite as far in developing artificial life as the film depicts, but the android Sophia, in this clip from Good Morning Britain, comes disconcertingly close (WARNING: This clip contains Piers Morgan).

Far from displaying the violent tendencies of Roy Batty, she even encouragingly hopes (as her programming dictates, naturally) that humans and robots can coexist, potentially working together to create a better society.

At the lecture, I asked Prof Wyatt about computer scientist and author Ray Kurzweil's theory of the Singularity, the point at which, as Wyatt described it, 'super-powered computers become very angry' and 'all bets are off' for humanity.

Wyatt, however, said that the 'vast majority of scientists who work in this area believe that (the Singularity) is science fiction', adding that, instead, 'we should be very concerned about people using this technology for their own evil purposes.' He cited the internet as an example: for all the world-changing good it has done, no one could have predicted the bad things that people would use it for, such as cyber-crime, viruses and social media abuse.

In 'Blade Runner', Dr Eldon Tyrell created Replicants to serve humanity. In such films, as in reality, artificial life is initially only as 'good' or 'bad' as the people who use it. Given the example of Sophia, we're a long way from an android killing its own creator, as Roy Batty does (unless someone programs it to do so!). Perhaps Tyrell foresaw the potential downside of his creations' emotional development, and that is why he built them to die sooner rather than later in the first place. Hopefully, if robotics advances to this stage in reality, those responsible will install something similar to sci-fi author Isaac Asimov's Laws of Robotics, which prohibit an artificial being from harming an organic one.

In the real world, AI is present in vehicles, mobile phones and laptops, all of which exist to make life easier for us. Yet AI is also increasingly being used in warfare, in drones and in the development of military robots, as this paper from last year shows.

Humanity is capable of creating (artificial) life, as well as bringing about its own destruction through AI-based technology, but how far will playing God take us as a species? Whilst we are able to improve and prolong life in this world through advances in medicine, overcoming death altogether is still 'beyond (our) jurisdiction', as it was with Tyrell. That ability still belongs to God, and we need the humility to see our own vulnerability in the potential power of our artificial creations, the wisdom to use them for the world's greater good and the faith that God will guide us in how to do this.

Pictured top is Prof John Wyatt speaking at Norwich Anglican Cathedral.